AI for Prioritizing Product Features

AI is transforming how product teams decide what to build next. By analyzing feedback, user behavior, and business metrics, AI helps teams base decisions on data rather than guesswork. Key benefits, as explored in our Product & Growth Insights, include:

- Better Decisions: AI connects user feedback to actual outcomes, like churn or revenue impact, prioritizing features that matter most.

- Faster Analysis: Tasks that used to take days, like tagging feedback or estimating effort, now take minutes.

- Unseen Insights: AI identifies patterns and themes in data that humans might miss, helping teams focus on overlooked user needs.

For example, companies like SAP SE and Fivetran have used AI to cut analysis time, improve accuracy, and validate feature investments with measurable results. AI also enhances prioritization frameworks like RICE and Kano, making them dynamic and data-driven. While AI speeds up and improves decision-making, human expertise remains crucial to ensure these insights lead to impactful products.

How AI Improves Feature Prioritization

AI takes the guesswork out of feature prioritization, turning what used to take days into a matter of minutes. By leveraging data, automating analysis, and uncovering hidden patterns, AI transforms how decisions are made at every step.

Moving from Guesswork to Data-Backed Decisions

Feature prioritization used to rely heavily on spreadsheets and manual tagging, where consistency was often only 50–60%. AI-based classification can deliver over 80% accuracy, making the inputs to feature decisions far more reliable.

For instance, SAP SE analyzed 8,757 user comments across 40 software products between January 2022 and November 2023. Using a multi-label classification model built on SBERT (Sentence-BERT) sentence embeddings, the team achieved an F1 score of 0.82 when categorizing complex feedback, such as "Licensing." This allowed SAP SE to validate whether specific investments actually reduced user complaints. Instead of relying on educated guesses, they had hard data showing how their feature investments translated into real-world impact.
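
To make the F1 figure concrete, here is a minimal sketch of how a per-label F1 score can be computed for multi-label feedback tagging. The comments, labels, and the `per_label_f1` helper are hypothetical and purely illustrative; this is not SAP SE's pipeline.

```python
from typing import Dict, List, Set


def per_label_f1(truth: List[Set[str]], predicted: List[Set[str]]) -> Dict[str, float]:
    """Return the F1 score for each label in a multi-label tagging task."""
    labels = set().union(*truth, *predicted)
    scores = {}
    for label in sorted(labels):
        pairs = list(zip(truth, predicted))
        tp = sum(1 for t, p in pairs if label in t and label in p)
        fp = sum(1 for t, p in pairs if label not in t and label in p)
        fn = sum(1 for t, p in pairs if label in t and label not in p)
        denom = 2 * tp + fp + fn
        # F1 = 2*TP / (2*TP + FP + FN); defined as 0 when the label never occurs
        scores[label] = 2 * tp / denom if denom else 0.0
    return scores


# Hypothetical hand-labelled comments vs. model output (one set of labels per comment)
truth = [{"Licensing"}, {"Licensing", "Performance"}, {"Performance"}, {"Licensing"}]
predicted = [{"Licensing"}, {"Licensing"}, {"Performance", "Licensing"}, {"Licensing"}]

scores = per_label_f1(truth, predicted)
for label, f1 in scores.items():
    print(f"{label}: F1 = {f1:.2f}")
```

Tracking F1 per label rather than a single overall accuracy makes it easier to see which feedback categories the model handles well and which still need human review.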

Saving Time in the Decision-Making Process

AI doesn’t just make decisions smarter; it makes them faster. By automating time-consuming tasks, teams can analyze feedback and act on it much more quickly, ensuring users get the features they need without delay.

Take Fivetran as an example. In January 2026, Shiva Mogili, their Director of Product Management, introduced an AI system that pulls data from tools like Zendesk, Jira, and Salesforce. This system generates automated "T-shirt sizing" effort estimates and feasibility reports within an hour of a new request. Mogili explains:

"The output is a complete report that includes a feasibility assessment, 'T-shirt sizing' effort estimates, API research findings with evidence..."

Similarly, Greyhound used AI in 2026 to unify feedback from post-ride surveys, station reports, and driver evaluations. What used to take days to analyze now only takes minutes, enabling station managers to address location-specific issues the same day they’re flagged.

Finding Patterns That Humans Overlook

AI doesn’t just speed up analysis - it also reveals insights that humans might miss. Using unsupervised topic-modeling techniques such as Latent Dirichlet Allocation (LDA) and Non-negative Matrix Factorization (NMF), AI groups related feedback into meaningful themes without requiring manual tagging. This makes it easier to spot recurring issues that might otherwise go unnoticed.
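
As a rough illustration of how NMF surfaces themes, the sketch below factorizes a tiny comment-by-term count matrix using the classic multiplicative update rules, in plain Python. The vocabulary, counts, and function names are made up for this example; a production system would more likely use a library implementation on TF-IDF features.

```python
import random
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def transpose(a: Matrix) -> Matrix:
    return [list(col) for col in zip(*a)]


def squared_error(v: Matrix, w: Matrix, h: Matrix) -> float:
    """Squared Frobenius distance between v and its reconstruction w @ h."""
    wh = matmul(w, h)
    return sum((x - y) ** 2 for rv, rr in zip(v, wh) for x, y in zip(rv, rr))


def nmf(v: Matrix, k: int, iters: int = 200, seed: int = 0) -> Tuple[Matrix, Matrix]:
    """Factor v (comments x terms) into w (comments x topics) and h (topics x terms)."""
    rng = random.Random(seed)
    w = [[rng.random() + 0.1 for _ in range(k)] for _ in v]
    h = [[rng.random() + 0.1 for _ in v[0]] for _ in range(k)]
    eps = 1e-9  # guards against division by zero
    for _ in range(iters):
        wt = transpose(w)
        num, den = matmul(wt, v), matmul(matmul(wt, w), h)
        h = [[h[i][j] * num[i][j] / (den[i][j] + eps) for j in range(len(h[0]))]
             for i in range(k)]
        ht = transpose(h)
        num, den = matmul(v, ht), matmul(w, matmul(h, ht))
        w = [[w[i][j] * num[i][j] / (den[i][j] + eps) for j in range(k)]
             for i in range(len(w))]
    return w, h


# Hypothetical term counts: one row per comment, one column per vocabulary term
vocab = ["slow", "loading", "crash", "login", "password", "reset"]
counts = [
    [2, 1, 0, 0, 0, 0],
    [1, 2, 1, 0, 0, 0],
    [0, 0, 0, 1, 2, 1],
    [0, 0, 0, 2, 1, 1],
]

w, h = nmf(counts, k=2)
for topic in h:
    top_terms = sorted(zip(topic, vocab), reverse=True)[:3]
    print([term for _, term in top_terms])
```

Each row of `h` is a theme expressed as term weights, and each row of `w` shows how strongly a comment belongs to each theme, which is what lets related feedback be grouped without manual tags.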

One SaaS company, for example, used Perspective AI to interview 48 customers and discovered that 67% prioritized time tracking for billing, a need they hadn’t previously considered. Acting on these AI-driven insights, they built new features that, over nine months, resulted in a 31% improvement in retention and a 28% boost in average revenue per user (ARPU). The data had always been there, but AI made the connections clear.

Companies using AI-powered theme discovery report an impressive 543% ROI over three years. Why? Because they’re building features that directly address user needs instead of chasing assumptions. AI ensures that decisions are grounded in what users actually want, not just what teams think they want.

Data Sources AI Analyzes for Prioritization

AI doesn’t decide which features to prioritize in isolation. Instead, it draws from a variety of data sources - both structured (like numerical metrics) and unstructured (like user feedback) - to form a well-rounded understanding of user needs and business objectives. These inputs lay the groundwork for deeper analysis in three key areas.

Customer Feedback and Text Responses

Using natural language processing (NLP), AI can sift through support tickets, survey responses, reviews, and feedback forms to uncover patterns and insights. It organizes feedback into themes, gauges sentiment, and pinpoints recurring issues. For instance, AI might flag common complaints like "confusing onboarding" or "slow performance", saving teams from manually combing through endless responses.

Platforms such as Modu make this process even easier. They collect private user feedback through text modules, visible only to admins, while also analyzing public suggestion boards. By examining both private and public inputs, AI captures not just what users are asking for but also how they’re interacting with existing features.

Product Usage Data

AI doesn’t just rely on what users say - it also looks at what they do. By analyzing behavioral metrics such as drop-off rates, activation funnels, and engagement levels, AI identifies where users might be struggling. For example, if a 15% drop in conversion rates occurs during a specific onboarding step, AI can cross-reference this with feedback like "the app is slow" to confirm the root cause. This connection between behavior and sentiment helps teams zero in on issues affecting a large portion of users.
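
The cross-referencing step can be sketched in a few lines: compute the drop-off at each funnel step, then look up the feedback attached to the worst one. The funnel numbers, step names, and `feedback_by_step` mapping below are invented for illustration.

```python
from typing import Dict, List, Tuple


def step_dropoff(funnel: List[Tuple[str, int]]) -> Dict[str, float]:
    """Fraction of users lost on entering each step, relative to the previous step."""
    rates = {}
    for (_, prev_users), (step, users) in zip(funnel, funnel[1:]):
        rates[step] = 1 - users / prev_users if prev_users else 0.0
    return rates


# Hypothetical onboarding funnel: (step name, users who reached it)
funnel = [
    ("signup", 1000),
    ("connect_data", 850),
    ("first_report", 510),
    ("invite_team", 460),
]

# Hypothetical feedback already tagged with the step it refers to
feedback_by_step = {
    "first_report": ["the app is slow", "report takes forever to load"],
    "invite_team": ["unclear pricing for extra seats"],
}

dropoff = step_dropoff(funnel)
worst_step = max(dropoff, key=dropoff.get)

print(f"Largest drop-off: {worst_step} ({dropoff[worst_step]:.0%})")
print("Related feedback:", feedback_by_step.get(worst_step, []))
```

A match between the step with the steepest drop-off and a cluster of complaints is a hypothesis worth testing, not proof of the root cause.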

Business Goals and Development Costs

Popularity alone isn’t enough to determine a feature’s priority. AI also considers its potential impact on revenue and the resources required to build it. By pulling data from CRM tools like Salesforce, AI can weigh requests based on factors like account size or Monthly Recurring Revenue (MRR), ensuring that high-value customers are addressed. Additionally, AI offers effort estimates, helping teams assess whether a feature is a quick fix or a complex, long-term project.
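
A minimal sketch of revenue weighting, assuming requests have already been matched to accounts and that MRR per account is available from the CRM. The account names, figures, and `rank_by_mrr` helper are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def rank_by_mrr(requests: List[Tuple[str, str]], mrr: Dict[str, float]) -> List[Tuple[str, float]]:
    """Rank features by the total MRR of the distinct accounts requesting them."""
    totals: Dict[str, float] = defaultdict(float)
    seen = set()
    for feature, account in requests:
        if (feature, account) in seen:
            continue  # count each account once per feature
        seen.add((feature, account))
        totals[feature] += mrr.get(account, 0.0)
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Hypothetical (feature, account) request pairs and MRR per account in USD
requests = [
    ("sso", "acme"),
    ("sso", "globex"),
    ("dark_mode", "initech"),
    ("dark_mode", "umbrella"),
    ("dark_mode", "hooli"),
    ("dark_mode", "hooli"),  # duplicate request from the same account
]
mrr = {"acme": 5000, "globex": 3000, "initech": 200, "umbrella": 150, "hooli": 100}

ranking = rank_by_mrr(requests, mrr)
for feature, total in ranking:
    print(f"{feature}: ${total:,.0f} MRR behind this request")
```

Here `dark_mode` has more requesters, but `sso` ranks first because the accounts asking for it represent far more recurring revenue. In practice, teams usually look at both views side by side.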

Prioritization Frameworks Enhanced by AI

AI is reshaping how teams approach prioritization frameworks by turning them into dynamic, data-driven tools. Instead of relying on static spreadsheets or manual guesswork, AI analyzes feedback, estimates effort, and predicts impact with precision, providing real-time insights that make decision-making faster and more accurate.

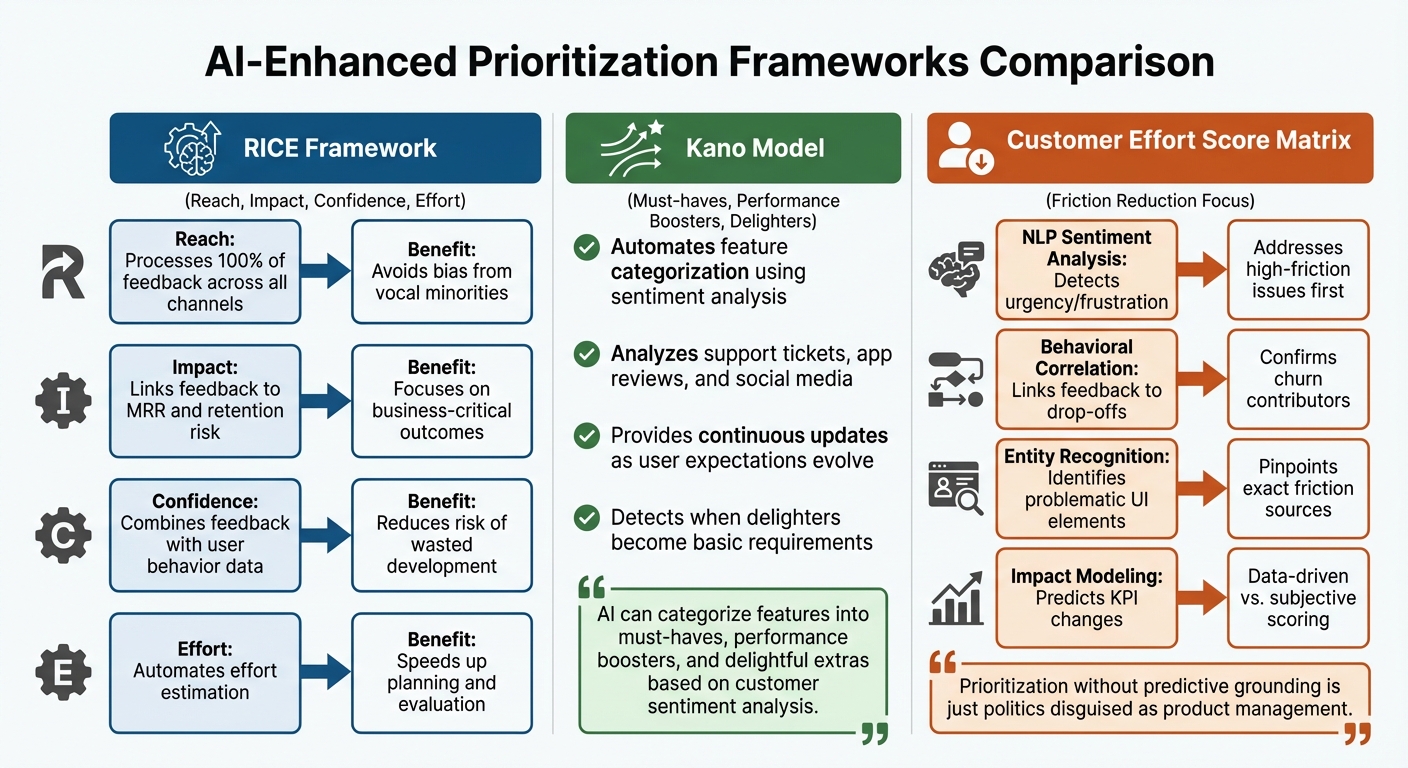

RICE Scoring with AI

RICE (Reach, Impact, Confidence, Effort) is a widely used framework for evaluating features based on their user reach, business value, certainty of success, and implementation effort. AI can improve each component by reducing bias and reliance on assumptions.

AI-powered tools continuously update RICE values as new data becomes available, making the framework more dynamic and reliable. For instance, AI evaluates all user feedback - not just the loudest opinions - when calculating Reach. It also connects feedback to metrics like Monthly Recurring Revenue (MRR) and retention risk to prioritize features that deliver measurable business value. Confidence scores benefit from AI’s ability to link qualitative feedback with user behavior, reducing the risk of developing features that go unused. For Effort, AI quickly generates automated estimates, streamlining technical evaluations.

| RICE Component | AI Enhancement | Benefit |
|---|---|---|
| Reach | Processes 100% of feedback across all channels | Avoids bias from vocal minorities |
| Impact | Links feedback to MRR and retention risk | Focuses on business-critical outcomes |
| Confidence | Combines feedback with user behavior data | Reduces risk of wasted development |
| Effort | Automates effort estimation | Speeds up planning and evaluation |
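
For reference, the standard RICE score is (Reach × Impact × Confidence) / Effort. The sketch below shows how scores could be recomputed whenever AI-updated inputs arrive. The feature names and numbers are invented, and the units (users per quarter, person-months) are just one common convention.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # users affected per quarter
    impact: float      # relative impact, e.g. 0.25, 0.5, 1, 2, or 3
    confidence: float  # 0.0 to 1.0
    effort: float      # person-months

    @property
    def rice(self) -> float:
        """Standard RICE score: (reach * impact * confidence) / effort."""
        if self.effort <= 0:
            raise ValueError("effort must be positive")
        return self.reach * self.impact * self.confidence / self.effort


# Hypothetical backlog items with inputs that an AI pipeline might refresh
backlog = [
    Feature("guided onboarding", reach=2000, impact=2, confidence=0.8, effort=4),
    Feature("csv export", reach=500, impact=1, confidence=0.5, effort=1),
]

ranked = sorted(backlog, key=lambda f: f.rice, reverse=True)
for feature in ranked:
    print(f"{feature.name}: RICE = {feature.rice:.0f}")
```

Because the score is a simple function of four inputs, keeping it "dynamic" mostly means re-running this calculation whenever the underlying feedback, usage, or effort data changes.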

Kano Model with AI

The Kano Model is commonly simplified into three groups of product features: must-haves (basic expectations), performance boosters (features that proportionally increase satisfaction), and delighters (unexpected features that surprise and please users). The full model also includes indifferent and reverse categories. Traditionally, teams rely on surveys to classify features, but AI automates this process by analyzing customer sentiment from diverse sources like support tickets, app reviews, and social media.

"AI can categorize features into must-haves, performance boosters, and delightful extras based on customer sentiment analysis." - Buildly [1]

AI offers continuous updates to the Kano Model, ensuring it reflects evolving user expectations. For example, a feature that once delighted users might now be considered a basic requirement. AI detects these shifts automatically, keeping your roadmap aligned with current user needs rather than outdated assumptions.
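
One way to picture sentiment-based Kano classification is the simplified heuristic below. It assumes each feature has two average sentiment scores in the range -1 to 1: one from feedback where the feature is present and working, and one from feedback where it is missing or broken. The 0.3 threshold, the scores, and the `kano_category` function are illustrative assumptions, not a standard algorithm.

```python
def kano_category(sentiment_present: float, sentiment_absent: float,
                  threshold: float = 0.3) -> str:
    """Map two average sentiment scores (-1..1) to a simplified Kano category."""
    pleased_when_present = sentiment_present >= threshold
    upset_when_absent = sentiment_absent <= -threshold
    if pleased_when_present and upset_when_absent:
        return "performance"
    if upset_when_absent:
        return "must-have"
    if pleased_when_present:
        return "delighter"
    return "indifferent"


# Hypothetical scores for the same feature in two different periods
last_year = kano_category(sentiment_present=0.7, sentiment_absent=-0.1)
this_year = kano_category(sentiment_present=0.1, sentiment_absent=-0.6)

print(f"dark mode last year: {last_year}")
print(f"dark mode this year: {this_year}")
if last_year != this_year:
    print("Category shifted: revisit roadmap assumptions for this feature")
```

Re-running a classification like this on a rolling window of feedback is what makes it possible to notice when a former delighter has become a baseline expectation.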

Customer Effort Score (CES) Matrix

The CES Matrix prioritizes features by focusing on how much friction they remove from the user experience. While traditional methods rely on manual feedback analysis, AI uncovers "invisible" friction points - issues users encounter but may not explicitly report. By examining behavioral data like usage patterns and activation funnels, AI identifies where users drop off and connects these behaviors to feedback.

For example, AI uses natural language processing (NLP) to detect frustrated or urgent tones in user comments, ensuring high-friction issues are addressed first. It also validates whether reported problems are causing churn by linking feedback to actual user behavior. Additionally, entity recognition pinpoints specific interface elements causing difficulties, while impact modeling predicts how resolving these issues will influence metrics like activation and retention.

"Prioritization without predictive grounding is just politics disguised as product management." - Figr.design [2]

| AI Method | Role in CES Matrix | Benefit |
|---|---|---|
| NLP Sentiment Analysis | Detects urgency or frustration in feedback | Addresses high-friction issues first |
| Behavioral Correlation | Links feedback to drop-offs | Confirms whether issues contribute to churn |
| Entity Recognition | Identifies problematic UI elements | Pinpoints exact sources of friction |
| Impact Modeling | Predicts changes in KPIs | Replaces subjective scoring with data-driven insights |

How Feedback Collection Supports AI Analysis

The accuracy of AI-driven prioritization hinges on how well feedback is collected and organized. If the data is incomplete or skewed, the resulting recommendations will miss the mark. A systematic approach to gathering user input across various channels and formats ensures a solid foundation for AI analysis, as we'll explore further in this piece.

Methods for Collecting Feedback

Different types of feedback provide distinct insights. For instance, quantitative data from ratings or polls delivers numerical inputs that frameworks like RICE can use directly, while qualitative feedback adds depth, helping to identify user sentiment and emerging trends.

Modu simplifies this process with its diverse feedback modules:

- Rating Modules: Gather 1-5 scale scores to measure satisfaction.

- Suggestion Modules: Let users vote on feature requests, highlighting popular priorities.

- Text Modules: Collect open-ended feedback for deeper insights.

- Single/Multiple Choice Modules: Provide structured poll data for comparing preferences.

| Modu Module Type | Data Generated | AI Utility |
|---|---|---|
| Rating | Numeric satisfaction scores (1-5 scale) | Helps quantify sentiment for impact assessments |
| Suggestions | Feature requests with vote counts | Identifies high-demand features through user preferences |
| Text | Open-ended responses | Supplies qualitative data for NLP sentiment analysis |
| Single/Multiple Choice | Structured preference data | Tracks feature interest and supports A/B comparisons |

Structured feedback, like ratings and votes, feeds directly into prioritization algorithms, while unstructured inputs, such as text responses, are analyzed using Natural Language Processing (NLP). Together, these methods create a complete picture of user needs.

Using AI to Analyze Crowdsourced Priorities

Once feedback is collected and organized, AI steps in to uncover patterns that reflect real user demand and business value. This approach ensures the focus shifts from appeasing the loudest voices to addressing needs that genuinely impact users.

AI connects feedback data - like voting patterns and sentiment - with behavioral metrics such as activation and retention rates. For example, if a feature is frequently requested and data shows users abandon workflows due to its absence, AI flags it as a high-priority need. This method ensures that prioritization aligns with measurable outcomes rather than just vocal opinions.

When suggestion boards are used, AI analyzes vote distributions to differentiate between features with broad appeal and niche requests. It also identifies when multiple submissions describe the same need in different ways, consolidating them to reveal the true level of demand. This automated clustering prevents important features from being overlooked due to fragmented feedback.
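
The consolidation idea can be sketched with a deliberately simple stand-in for semantic clustering: token overlap (Jaccard similarity) between suggestion titles. Real systems typically use embeddings instead. The stopword list, threshold, and sample suggestions here are assumptions chosen for illustration.

```python
import re
from typing import Dict, List, Set, Tuple

# Small illustrative stopword list; a real one would be much longer
STOPWORDS = {"a", "an", "the", "to", "for", "of", "in", "on", "and",
             "please", "add", "support", "i", "we", "want", "need"}


def tokens(text: str) -> Set[str]:
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def jaccard(a: Set[str], b: Set[str]) -> float:
    return len(a & b) / len(a | b) if a | b else 0.0


def consolidate(suggestions: List[Tuple[str, int]], threshold: float = 0.5) -> List[Dict]:
    """Greedily merge suggestions whose titles overlap with a cluster's first title."""
    clusters: List[Dict] = []
    for title, votes in suggestions:
        toks = tokens(title)
        for cluster in clusters:
            if jaccard(toks, cluster["tokens"]) >= threshold:
                cluster["votes"] += votes
                cluster["members"].append(title)
                break
        else:
            clusters.append({"tokens": toks, "votes": votes, "members": [title]})
    return clusters


# Hypothetical suggestion-board entries: (title, vote count)
suggestions = [
    ("Export reports to CSV", 40),
    ("Please add CSV export for reports", 25),
    ("Dark mode", 30),
    ("Export to CSV", 10),
]

clusters = consolidate(suggestions)
for cluster in sorted(clusters, key=lambda c: c["votes"], reverse=True):
    print(cluster["votes"], cluster["members"])
```

Taken individually, "Dark mode" would tie or beat most of the export requests. Once the three export variants are merged, their combined vote count shows the larger underlying demand.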

Validating AI Prioritization Recommendations

When using AI to prioritize features or tasks, it's crucial to validate these recommendations to ensure they lead to meaningful business results. AI might highlight trends or rank features, but teams need to confirm that these suggestions translate into measurable outcomes before allocating resources. This process bridges the gap between AI-generated insights and tangible success, ensuring that promising data-backed ideas deliver real-world value.

Understanding How AI Reaches Its Recommendations

To start, it's important to trace AI's recommendations back to the data that informed them. For example, when AI identifies a feature as high-priority, it should be able to link that decision to specific inputs like user feedback, behavioral patterns, or revenue metrics.

One effective way to validate these recommendations is through behavioral correlation. If AI flags "confusing onboarding" as a major issue based on user feedback, teams should check if this aligns with quantitative data, such as high churn rates during the onboarding phase. For instance, in January 2026, Motel Rocks used Zendesk Copilot to perform sentiment analysis and uncover recurring customer frustrations. By cross-referencing these patterns with support ticket volumes, they were able to address critical pain points, boosting customer satisfaction (CSAT) by 9.44% and reducing ticket volume by half.

Another method is MRR impact sorting, which ranks feature requests by the revenue of the customers making them, so that a large number of requests from low-revenue accounts does not automatically outweigh the needs of high-value ones. Popularity alone can mislead in other ways too. In 2022, Jesse Sandala, Director of Product at GiveButter, validated a highly requested "Auctions" feature. Instead of relying solely on popularity (600+ votes), the team assigned effort scores and strategic importance values to ensure alignment with business goals. The result? A successful launch with engagement levels that matched their predictions.

It's also essential to involve engineering teams early in the process to assess feasibility and resource requirements. AI might recommend a feature based on demand, but if the development effort outweighs the potential impact, it may not be worth pursuing. In January 2026, Fivetran's Director of Product Management, Shiva Mogili, implemented an AI-powered system that integrated data from Zendesk, Jira, and Salesforce into BigQuery. This system generated automated "T-shirt sizing" effort estimates and feasibility reports within an hour of receiving feature requests. This allowed the team to evaluate technical feasibility upfront and make informed roadmap decisions.

Once insights are validated, teams can simulate potential real-world outcomes to ensure AI predictions align with measurable return on investment (ROI).

Testing Scenarios and Estimating ROI

AI can help answer strategic questions like, "Which features are likely to turn struggling users into power users?" or "How much could fixing this onboarding issue reduce churn?"

A great way to validate AI recommendations is through A/B testing. Instead of fully building out a feature, release a limited version to a smaller user group and measure its impact on metrics like conversion rates, retention, or support ticket volume. This approach minimizes risk and confirms whether the AI's predictions hold up in practice.
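
To judge whether a difference in conversion between two groups is more than noise, a two-proportion z-test is one common choice. The sketch below uses only the standard library. The conversion counts are invented, and the test assumes reasonably large, independent samples.

```python
import math
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates B - A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical experiment: control (A) vs. limited feature release (B)
z, p_value = two_proportion_z_test(conv_a=120, n_a=1000, conv_b=160, n_b=1000)

print(f"z = {z:.2f}, p = {p_value:.4f}")
if p_value < 0.05:
    print("Difference is unlikely to be noise at the 5% level")
else:
    print("Not enough evidence yet; keep the test running or increase the sample")
```

Deciding on the sample size and significance threshold before the test starts helps avoid reading too much into early fluctuations.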

AI can also generate predictive simulations by analyzing historical user behavior and market trends. By examining how similar features performed in the past, AI can forecast adoption rates and usage patterns. This helps teams focus on features with the best chance of success while avoiding ideas that might fail to gain traction.

| AI Validation Method | Function | Business Alignment Benefit |
|---|---|---|
| Behavioral Correlation | Links feedback to usage metrics | Confirms if issues like churn tie to specific complaints. |
| MRR Impact Sorting | Ranks requests by customer revenue | Ensures focus on features that drive growth. |
| Effort Scoring | Estimates relative development effort (T-shirt sizing) | Avoids wasting resources on low-impact, high-effort tasks. |
| Segment Analysis | Compares power users vs. trial users | Helps decide whether to prioritize retention or acquisition. |

Conclusion

AI transforms subjective decision-making into a streamlined, data-focused process, helping teams save time and achieve better results. Instead of relying solely on personal opinions, teams can evaluate feedback patterns, usage data, and revenue impact to make well-supported choices. This approach minimizes wasted effort and ensures resources are directed toward features that truly make a difference. By doing so, AI lays the groundwork for integrating meaningful user feedback into product development.

For AI to deliver precise prioritization, structured feedback is key. Tools like Modu make it easier to collect high-quality user input, leveraging AI clustering to identify recurring themes and convert feedback into actionable priorities.

AI can process thousands of feedback submissions in just minutes. Connecting sentiment analysis with behavioral data helps confirm that popular requests align with what users actually need, which speeds up decision-making while maintaining accuracy.

It’s important to note that AI doesn’t replace human judgment - it enhances it. Teams still need to validate insights, evaluate feasibility, and consider strategic trade-offs. AI’s role is to uncover insights faster, while product teams use their expertise to turn those insights into features that boost retention, user satisfaction, and growth.

AI-powered prioritization creates a powerful feedback loop: users feel heard, teams make confident decisions, and products continue to improve. By combining AI-driven insights with human expertise, businesses can foster a cycle of continuous innovation and progress.

FAQs

What data do I need for AI feature prioritization?

To effectively prioritize product features with AI, you need to gather both structured and unstructured feedback. This includes sources like:

- Support tickets

- App reviews

- Social media mentions

- Surveys

- Ratings

- User suggestions

AI tools use techniques like Natural Language Processing (NLP) to analyze this data, uncovering themes, sentiment, and trends that might otherwise go unnoticed. The key here is to work with clean and enriched data. This means removing duplicate feedback, incorporating user context, and factoring in engagement metrics. When done right, this process helps create accurate, data-backed rankings that align with both user needs and your business objectives.

How can I trust AI recommendations for my roadmap?

AI suggestions for your roadmap leverage tools like Natural Language Processing (NLP), sentiment analysis, and pattern recognition to dig into customer feedback, usage patterns, and market trends. These techniques help prioritize features based on factors like user demand, potential business value, and the effort required to implement them.

While AI delivers recommendations grounded in data, its accuracy hinges on the quality of the input and the strength of the algorithms. To make the best decisions, it's essential to pair AI-driven insights with human expertise and consistently validate the results. This combination helps create more balanced and dependable choices.

How do I combine AI scores with RICE or Kano?

To blend AI scores with the RICE or Kano frameworks, start by leveraging AI to analyze customer feedback. AI can assign scores based on factors like demand, sentiment, and business impact.

For the RICE framework, incorporate these AI-generated scores into the four key components:

- Reach: Use AI insights to estimate how many users a feature or initiative will impact.

- Impact: Factor in AI-driven sentiment analysis to gauge how much value the feature adds.

- Confidence: Rely on AI's data-backed analysis to strengthen confidence in your estimates.

- Effort: Use AI to evaluate the resources required, making effort estimates more precise.

For the Kano model, AI insights can help classify features into categories like Must-Have, Performance, or Delighter (also called Excitement). By analyzing feedback trends and customer preferences, AI helps ground these classifications in data, making prioritization more objective and actionable.