AI in Open-Ended Feedback Analysis

AI in Open-Ended Feedback Analysis

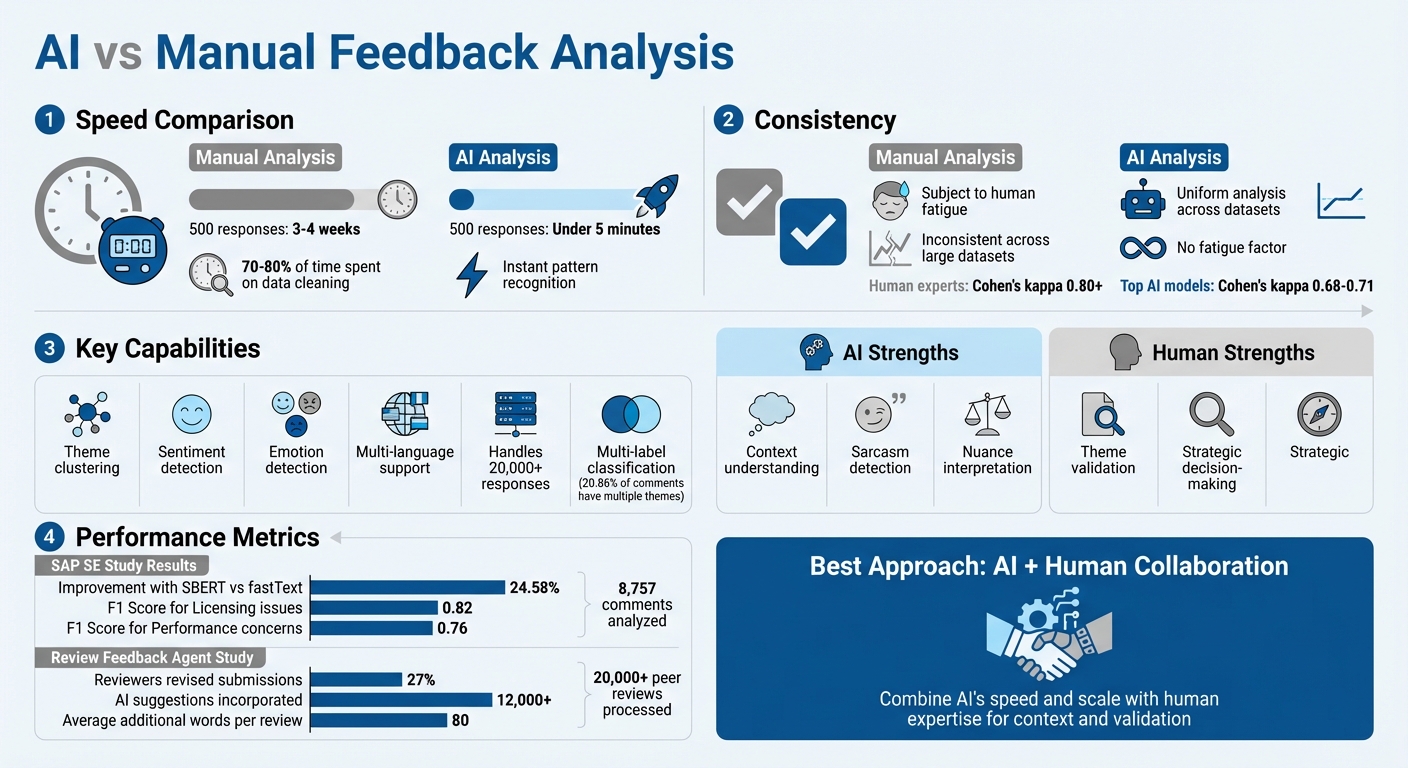

AI tools are changing how companies analyze open-ended feedback. Traditional methods of manually reviewing responses are time-consuming and inconsistent, often taking weeks to process even a few hundred comments. AI, on the other hand, can analyze thousands of responses in minutes, offering faster and more reliable insights.

Key takeaways:

- Speed: AI processes 500 responses in under 5 minutes, compared to 3–4 weeks manually.

- Consistency: Unlike humans, AI doesn’t experience fatigue, ensuring uniform analysis across large datasets.

- Insights: AI identifies patterns, themes, and emotions, helping teams understand user feedback on a deeper level.

AI techniques like theme clustering and sentiment analysis make it possible to organize feedback into actionable categories. However, combining AI with human review ensures context and nuance are not lost. Platforms like Modu integrate these capabilities with tools like Slack and Jira, turning feedback into tasks and decisions quickly and efficiently.

Build a Customer Feedback Analyzer

AI Techniques for Analyzing Feedback

AI excels at identifying patterns, detecting emotions, and organizing comments into meaningful categories. These capabilities help transform overwhelming volumes of feedback into structured, actionable insights.

Automated Theme Clustering

Natural Language Processing (NLP) drives theme clustering by scanning text, identifying keywords, and grouping responses based on meaning and intent. This approach ensures that related terms, including synonyms and industry-specific jargon (like "pricing", "usability", or "support"), are grouped together seamlessly [7][5][10]. For instance, even if a comment doesn’t explicitly mention keywords, such as "I kept waiting, but no update came", the system can intuitively classify it under a theme like "Poor Communication" [10].

A compelling example comes from SAP SE’s long-term UX measurement project, conducted between January 2022 and November 2023. This initiative analyzed 8,757 manually labeled user comments from 40 software products on the SAP Business Technology Platform (BTP). Researchers Sandra Loop and Erik Bertram employed a multi-label classification model using SBERT embeddings and the extreme gradient boosting algorithm. By transitioning from fastText to the SBERT (Transformer) architecture, they improved the micro-averaged F1 score for categorizing user feedback by 24.58%. The model performed particularly well in identifying trends, achieving an F1 score of 0.82 for "Licensing" issues and 0.76 for "Performance" concerns [8].

One standout feature of advanced models is their ability to handle feedback with multiple themes. SAP’s dataset revealed that 20.86% of user comments included multiple topic labels, highlighting the need for AI capable of managing overlapping sentiments [8]. For example, a user might praise one feature while criticizing another. This multi-label approach helps organizations gain a nuanced understanding of user feedback, enabling faster transitions from raw data to strategic decisions.

While theme clustering organizes feedback, sentiment analysis adds another layer by uncovering the emotional tone behind user comments.

Sentiment and Emotion Detection

AI tools classify feedback as positive, negative, or neutral by analyzing language patterns. More advanced systems go beyond basic sentiment to detect emotions like joy, anger, frustration, and even sarcasm or subtle linguistic nuances [7][5]. As TGM Research points out:

"NLP-enabled AI surveys can effectively analyze open-ended responses, interpreting user sentiments–even sarcasm–and the subtle nuances of language" [7].

Aspect-Based Sentiment Analysis (ABSA) takes this a step further by breaking down sentiments by topic. For instance, a comment might reflect satisfaction with functionality but dissatisfaction with pricing, and ABSA tags these sentiments individually [7][5].

However, sentiment analysis has its limitations. Research at SAP SE found that sentiment scores alone don’t reliably capture overall product satisfaction. Sandra Loop, a researcher at SAP SE, explains:

"Sentiment analysis alone does not reliably reflect user satisfaction. Instead, product satisfaction needs to be assessed explicitly in surveys to measure the user's perception of the product" [8].

For example, a user might express frustration with a minor issue but still report high overall satisfaction in survey ratings. Combining sentiment detection with explicit satisfaction metrics - and incorporating human judgment - provides a more comprehensive understanding of user feedback.

Combining AI with Human Expertise

AI can process thousands of responses in seconds, but it takes human insight to truly capture context and nuance. By pairing AI's speed and analytical power with human review, organizations can ensure that insights are not just fast but also relevant and actionable.

Validating AI-Generated Themes

Human validation plays a critical role in refining AI-identified patterns to ensure they align with real-world business needs. While AI is excellent at spotting recurring keywords and grouping similar responses, it often misses the finer details that only humans can catch. Rewina Bedemariam and colleagues highlight this balance:

"While LLM-as-judge offer a scalable solution comparable to human raters, humans may still excel at detecting subtle, context-specific nuances" [11].

Without human oversight, AI outputs can drift away from the true essence of feedback, leading to flawed conclusions.

For example, a study involving 2,784 participants revealed that individuals who were skeptical of AI were better at catching errors. This underscores the importance of combining robust algorithm performance with critical human review [14]. However, organizations must structure workflows to encourage thoughtful analysis rather than passive approval. High review workloads can lead to fatigue, increasing the risk of oversights and errors [14]. Providing transparent explanations - like showing why AI grouped certain responses together - empowers reviewers to make more informed decisions [13].

Using AI and Human Input for Better Decisions

Once themes are validated, combining human inquiry with AI insights can lead to better decision-making. The most valuable insights come from an iterative process where AI surfaces trends automatically, and humans refine those patterns into actionable strategies. For instance, if AI identifies "equipment concerns" as a recurring theme, a human reviewer might dig deeper by asking, "What specific equipment issues are employees highlighting?" This back-and-forth approach helps translate raw data into practical business actions [4].

Collaboration between humans and AI also helps mitigate biases that AI might introduce. For example, AI chatbots used to code open-ended responses can sometimes overestimate false positives, especially when respondents are overly agreeable. Human reviewers step in to apply context and correct such errors [12]. As Lixiang Yan and colleagues point out, concerns about AI's:

"trustworthiness and accuracy, reliability and consistency, limited contextual understanding"

persist, reinforcing the need for human oversight [13]. Together, AI and human expertise create a system that is both efficient and trustworthy.

Research Findings and Performance Data

Recent Research on AI Feedback Tools

AI systems are proving their worth in handling feedback volumes that would otherwise overwhelm human teams. Take the "Review Feedback Agent", for example. In April 2025, researchers Nitya Thakkar and James Zou introduced this tool at the ICLR conference to process over 20,000 peer reviews. The results? The system generated professional feedback suggestions that led 27% of reviewers to revise their original submissions. In total, over 12,000 AI-generated suggestions were incorporated, with reviews gaining an average of 80 additional words. These changes made the feedback more detailed and actionable [15].

Beyond academic settings, industry projects also highlight AI's capabilities. For instance, SAP SE successfully used AI to track feedback trends across multiple product categories. This approach far outpaced what manual reviews could achieve in terms of both efficiency and scale [8].

Measuring AI Tool Performance

AI isn't just about handling large volumes of data - its performance metrics are also worth noting. On the accuracy front, AI tools show promise but still fall short of matching human expertise. For example, the "Muse" AI qualitative assistant achieved a Cohen's kappa of 0.71 when aligning with human experts on well-defined codes, reflecting substantial agreement [17]. However, in a classification study of learner responses, the top-performing model, DeepSeek-V3, reached a kappa of only 0.68, while other models ranged from 0.37 to 0.61 [16]. For comparison, human experts typically achieve kappa scores of 0.80 or higher, indicating stronger reliability.

Where AI truly shines is in processing speed and scalability. Transformer-based models like SBERT improved classification performance by 24.58% over older models like fastText, enabling them to handle massive datasets that would be unmanageable for humans [8]. One study, for instance, analyzed nearly 894,000 product reviews, extracting over one million aspect-sentiment pairs. Completing this task manually would take human teams months, if not years [9]. However, experts emphasize the importance of oversight when using generative AI for qualitative analysis:

"the necessity of expert oversight when integrating GenAI as a support tool in qualitative data analysis" [16].

How Modu Uses AI for Feedback Analysis

AI Clustering and Trend Detection in Modu

Modu processes private, open-ended feedback from surveys, bug reports, and customer comments using AI-powered thematic coding and clustering. This technology identifies recurring themes by organizing feedback into categories such as "product quality", "pricing", or "staff friendliness." Interestingly, a single response can be tagged with multiple relevant themes, giving a more nuanced understanding of customer input [18][19]. On top of that, Modu employs sentiment and emotion detection to classify responses as positive, negative, or neutral. It even distinguishes between minor complaints and more serious dissatisfaction, helping teams focus on what matters most [18].

Team Collaboration and Integration Tools

Once themes are identified, Modu transforms these insights into actionable metrics. By converting open-ended comments into ranked themes and sentiment charts, the platform highlights key patterns and trends at a glance [19]. This makes it easier for teams to zero in on frequently mentioned topics and understand the emotional tone behind the feedback. To streamline workflows, Modu integrates with tools like Slack, Jira, Trello, Linear, and ClickUp, allowing teams to create tasks or notifications for urgent issues directly from the platform. Additionally, data can be exported to Google Sheets for stakeholder reviews. This seamless combination of AI analysis and workflow tools ensures that feedback doesn't just sit idle - it becomes a catalyst for action.

Key Takeaways

AI has revolutionized the way we analyze open-ended feedback. What used to take analysts 3–4 weeks - manually coding 500 responses - can now be done in under 5 minutes [1][2]. This shift eliminates the time-consuming bottleneck where analysts spent 70–80% of their time cleaning and organizing data before diving into meaningful analysis [20].

Today's AI tools go beyond basic processing. They can detect sentiment, tone, and urgency, while clustering responses into clear themes [3][4][5]. They even handle feedback in multiple languages simultaneously, uncovering patterns that traditional multiple-choice surveys often miss [5][6].

This progress paves the way for a hybrid approach, blending AI's efficiency with human expertise. AI takes care of intensive tasks like pattern recognition and auto-coding, while human researchers step in to validate themes, interpret subtleties like sarcasm, and shape the insights into actionable strategies [21]. Together, this partnership transforms raw data into strategic decisions.

Platforms like Modu showcase this in action. They take open-ended comments, turn them into ranked themes and sentiment charts, and seamlessly integrate with tools like Slack, Jira, and Linear. This integration allows teams to create tasks for urgent issues directly from the insights. The result? A streamlined feedback loop - from collection to actionable insights - without the weeks of manual effort that used to make large-scale qualitative analysis a daunting task [19].

FAQs

When should humans review AI-coded feedback?

Humans should step in to review AI-generated feedback when there's a need to evaluate its accuracy, context, or possible bias. This becomes particularly crucial in complex or sensitive situations where a deeper, more nuanced understanding is essential.

How accurate is AI at detecting sentiment and sarcasm?

AI's ability to detect sarcasm has shown improvement, but it’s far from perfect. For instance, fine-tuned models such as GPT-3 can hit an accuracy of around 81%, while zero-shot GPT-4 models perform slightly lower at about 71%. However, sarcasm often hinges on subtle social cues and context - areas where AI still struggles to grasp the full picture.

How does Modu turn feedback themes into team tasks?

Modu simplifies the process of transforming open-ended feedback into actionable tasks. By identifying recurring themes in feedback, the platform organizes them into clear, manageable items. Users can group feedback into categories, set priorities for key themes, and work together on tasks - all within Modu. With tools like boards, AI-powered trend analysis, and team collaboration features, it makes managing feedback and turning it into action much more efficient.